In a previous post, we established a vital distinction: the difference between an induced event (arising from human intervention) and a triggered one (the release of pre-existing tension in a system).

A rigorous distinction between triggered and induced processes can be formalized using the 100 prisoners problem, as discussed in both the algorithmic literature (Gál and Miltersen 2007) and expository mathematical sources (Pahor 2024), as well as in riddle videos.

While there are many variations and related paradoxes, this formulation is particularly revealing from a structural perspective: it produces one of the largest known gaps between a naive triggered interpretation and a structurally correct induced one, leading to dramatically different outcomes.

In its naive formulation:

- each participant acts independently

- joint success probability is $ (1/2)^{100} $

- this corresponds to a triggered process:

  - independent actions

  - no interaction

  - no structural dependence

The Induced Strategy

The optimal solution relies on a cycle-following (loop) strategy:

- each participant follows a deterministic chain induced by a permutation

- the system self-organizes into cycles

The success condition becomes: all cycles have length ≤ 50. The resulting probability is approximately $ 0.31 $. This is not an improvement in estimation; it is a redefinition of the process.
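The strategy is easy to check numerically. The following is a minimal Python sketch, not taken from the cited sources: the function names (`follows_cycle`, `estimate_success`), the trial count, and the seed are illustrative assumptions. Each prisoner starts at the drawer bearing their own number and follows the pointers; a trial succeeds only if everyone finds their number within 50 openings.

```python
import random


def follows_cycle(perm, prisoner, max_opens):
    """Return True if `prisoner` finds their own number within `max_opens` drawers.

    perm[d] is the number hidden in drawer d (both 0-based).
    """
    drawer = prisoner
    for _ in range(max_opens):
        if perm[drawer] == prisoner:
            return True
        drawer = perm[drawer]  # follow the pointer induced by the permutation
    return False


def estimate_success(n=100, trials=4000, seed=0):
    """Monte Carlo estimate of the joint success probability of the loop strategy."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(trials):
        perm = list(range(n))
        rng.shuffle(perm)
        if all(follows_cycle(perm, p, n // 2) for p in range(n)):
            wins += 1
    return wins / trials


if __name__ == "__main__":
    print(f"loop strategy : {estimate_success():.3f}")
    print(f"naive strategy: {0.5 ** 100:.3e}")
```

With a few thousand trials the estimate should settle near 0.31, while the naive figure is of the order of $10^{-31}$.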

The key structural insight is that:

- for $ n = 100 $ → probability ≈ 0.31

- for $ n \to \infty $ → probability tends to $ 1 - \ln 2 \approx 0.307 $, which stays below the bound of 0.5[^1]

- whereas: naive strategy → $ (1/2)^n $ i.e. exponential decay
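These figures follow from a closed form. For even $n$, a random permutation contains a cycle of length $k > n/2$ with probability exactly $1/k$, and at most one such cycle can exist, so the success probability is $1 - \sum_{k=n/2+1}^{n} 1/k$. The short Python sketch below evaluates it for several sizes; the function name `loop_success_probability` is an illustrative choice.

```python
import math


def loop_success_probability(n):
    """Exact success probability of the loop strategy for an even number n of prisoners."""
    if n <= 0 or n % 2:
        raise ValueError("n must be a positive even integer")
    # P(some cycle longer than n/2) = sum of 1/k for k = n/2 + 1, ..., n
    return 1.0 - sum(1.0 / k for k in range(n // 2 + 1, n + 1))


if __name__ == "__main__":
    for n in (10, 100, 1000, 10000):
        print(f"n={n:>6}  loop={loop_success_probability(n):.4f}  naive={0.5 ** n:.3e}")
    print(f"limit 1 - ln 2 = {1 - math.log(2):.4f}")
```

The loop probability decreases toward $1 - \ln 2$ from above, whereas the naive probability underflows to zero in floating point long before $n$ reaches 10,000.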

This leads to a fundamental conclusion: induced structures are dimensionally robust, while triggered processes degenerate under scale.

The cycle-following strategy has a local consistency property reminiscent of dynamic programming: after each drawer opening, the optimal continuation is simply to follow the induced pointer. But unlike classical Bellman optimality, this is not derived recursively — it is a consequence of the global combinatorial structure established by Curtin and Warshauer (2006) via Foata’s transformation.

Practical Implications: When Structure Matters

The distinction is not theoretical — it appears whenever:

- outcomes depend on how a system organizes information

- not just on how events are generated

Two domains illustrate this clearly: physics and actuarial science. A recurring pattern appears across disciplines:

- models are simplified for tractability

- simplifications become habitual

- and eventually, they are mistaken for the underlying structure

This is where error begins — and ignorance takes root.

1. In Physics

Describing gravity as a force is a useful simplification:

- it works in weak-field regimes

- it provides intuitive, computationally efficient results

But it is not fundamental. Gravity is not a force but a manifestation of geometry. Treating it otherwise is acceptable only as long as we remain aware of the approximation.

A concrete example is the Global Positioning System (GPS):

- satellite clocks experience different time flows due to both velocity (special relativity) and gravitational potential (general relativity)

- these effects accumulate to about 38 microseconds per day

If one were to rely purely on a Newtonian framework:

- time would be assumed absolute

- no relativistic correction would be applied

The consequence is not marginal: at the speed of light, 38 microseconds of clock error corresponds to roughly 11 km of ranging error, so positioning errors would grow by about 10 kilometers per day. In other words:

- Newtonian gravity works as a local approximation

- but the actual system (space-time geometry) is induced at a deeper level

Ignoring that structure does not just reduce precision — it leads to systematic divergence.
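The order of magnitude can be checked with one multiplication. The sketch below naively converts the uncorrected daily clock offset into a ranging error by multiplying by the speed of light. It ignores receiver geometry and is only a back-of-the-envelope check; the constant and function names are chosen for illustration.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458   # exact, by definition of the metre
CLOCK_DRIFT_S_PER_DAY = 38e-6          # net relativistic offset quoted above


def range_error_km(days):
    """Naive ranging error (km) after `days` of uncorrected relativistic clock drift."""
    return SPEED_OF_LIGHT_M_PER_S * CLOCK_DRIFT_S_PER_DAY * days / 1000.0


if __name__ == "__main__":
    print(f"{range_error_km(1):.1f} km after one day")
```

The error grows linearly with time, which is what makes the divergence systematic rather than a loss of precision.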

2. In Actuarial Practice

The analogue is clear:

- estimating IBNR (incurred but not reported) reserves by line of business (LoB) is often implemented through data truncation: when constructing a triangle for a given segment, only its own development is retained, while the rest of the portfolio is discarded

- this truncation creates an illusion of granularity

- for example, the same claim may be initially reported under CASCO (motor own damage) and later reallocated to RCA (motor third-party liability), or vice versa, following a reassessment of liability; if the claim is correctly evaluated from the outset (e.g., 10,000 estimated and 10,000 paid), then IBNR is, by construction, zero

- modeling LoBs independently breaks this invariance: the reclassification is misinterpreted as reserve deterioration in one segment and improvement in another, introducing artificial IBNR where none exists

- the same event can propagate to multiple segments, creating a dependence structure that is ignored when modeling segments independently
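The reclassification effect can be made concrete with a toy example. In the sketch below (hypothetical figures, a single claim, two development periods), a 10,000 claim is booked under CASCO at the first valuation and moved to RCA at the second. The names `incurred_by_lob` and `development` are illustrative.

```python
# Incurred amount of one claim, by line of business and development period.
# Period 0: booked under CASCO. Period 1: reallocated to RCA after reassessment.
incurred_by_lob = {
    "CASCO": [10_000, 0],
    "RCA": [0, 10_000],
}


def development(series):
    """Change in incurred between the first and the last development period."""
    return series[-1] - series[0]


# Truncated view: each LoB sees only its own row of the data.
per_lob = {lob: development(series) for lob, series in incurred_by_lob.items()}

# Joint view: the portfolio total per period, then its development.
portfolio = [sum(values) for values in zip(*incurred_by_lob.values())]
joint = development(portfolio)

if __name__ == "__main__":
    print(per_lob)   # apparent release in CASCO, apparent deterioration in RCA
    print(joint)     # no movement at portfolio level
```

Each truncated view shows a 10,000 movement that a development-based method would try to extrapolate, while the joint view shows none.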

Data truncation, i.e., excluding information prior to modeling, is not conditioning: truncation discards part of the data, whereas conditioning requires a joint model in which no information is lost. This is not merely a matter of operational convenience; it is a change in the underlying process being modeled.

From a regulatory perspective, this distinction matters. Under Solvency II[^2], when using grouped policy data, it must be demonstrated that the combination of grouping and method does not distort the total value of technical provisions.

More generally, excluding data requires explicit justification and must not introduce bias in the valuation. In this context, constructing LoB-specific triangles by discarding information from other segments is not a neutral simplification—it is a modeling choice that may alter the aggregate reserve, unless its impact is explicitly controlled.
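One way to make that impact visible is to compare the sum of segment-level reserves with the reserve obtained from the pooled data. The sketch below uses a basic volume-weighted chain ladder purely as a familiar stand-in (the post does not prescribe a method), and the two small cumulative triangles are invented for illustration.

```python
def chain_ladder_reserve(triangle):
    """Reserve (ultimate minus latest) from a cumulative run-off triangle.

    `triangle` is a list of rows; row i holds the observed cumulative
    amounts of origin period i, so each row is one cell shorter than the last.
    """
    n = len(triangle)
    # Volume-weighted development factors from column j to column j + 1.
    factors = []
    for j in range(n - 1):
        rows = [row for row in triangle if len(row) > j + 1]
        factors.append(sum(r[j + 1] for r in rows) / sum(r[j] for r in rows))
    reserve = 0.0
    for row in triangle:
        ultimate = row[-1]
        for j in range(len(row) - 1, n - 1):
            ultimate *= factors[j]
        reserve += ultimate - row[-1]
    return reserve


# Hypothetical cumulative triangles for two lines of business.
lob_a = [[100, 150, 160], [110, 170], [120]]
lob_b = [[200, 220, 225], [50, 60], [300]]
# Pooled triangle: cell-by-cell sum of the two segments.
pooled = [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(lob_a, lob_b)]

sum_of_segments = chain_ladder_reserve(lob_a) + chain_ladder_reserve(lob_b)
pooled_reserve = chain_ladder_reserve(pooled)

if __name__ == "__main__":
    print(f"sum of LoB reserves: {sum_of_segments:.2f}")
    print(f"pooled reserve     : {pooled_reserve:.2f}")
    print(f"difference         : {sum_of_segments - pooled_reserve:.2f}")
```

The two totals differ materially on these toy numbers. The comparison does not say which figure is right, only that splitting the data before modeling is not a neutral operation on the aggregate.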

Conditioning only becomes meaningful if one starts from a global model and then derives LoB-specific views as conditional projections. In that case, no information is lost — the dependence structure is preserved.

The Semantic Rigor of Solvency II: Segment vs. Separate

It is worth noting the precise language used in the Solvency II Directive. Article 80 requires that undertakings segment their obligations into homogeneous risk groups, and as a minimum by Line of Business (LoB). Crucially, the directive does not say separate.

This distinction is not merely semantic; it is structural:

- To separate might imply data truncation (used for separating life and non-life).

- To segment implies output partitioning: modeling the joint underlying structure (the reporting system) and subsequently deriving granular, segment-specific conditional expectations.

In other words, the regulation demands that the end result be segmented, not that the data be separated. Interpreting segmentation as separation directly violates Article 19 of Delegated Regulation (EU) 2015/35 (which prohibits the unjustified exclusion of information) and Article 34 of the same regulation (which forbids data grouping that distorts the best estimate). By cutting the data, the modeler destroys the induced dependence, transforming an induced process into a naive triggered one.

From Pricing to Reserving: Where the Process Changes

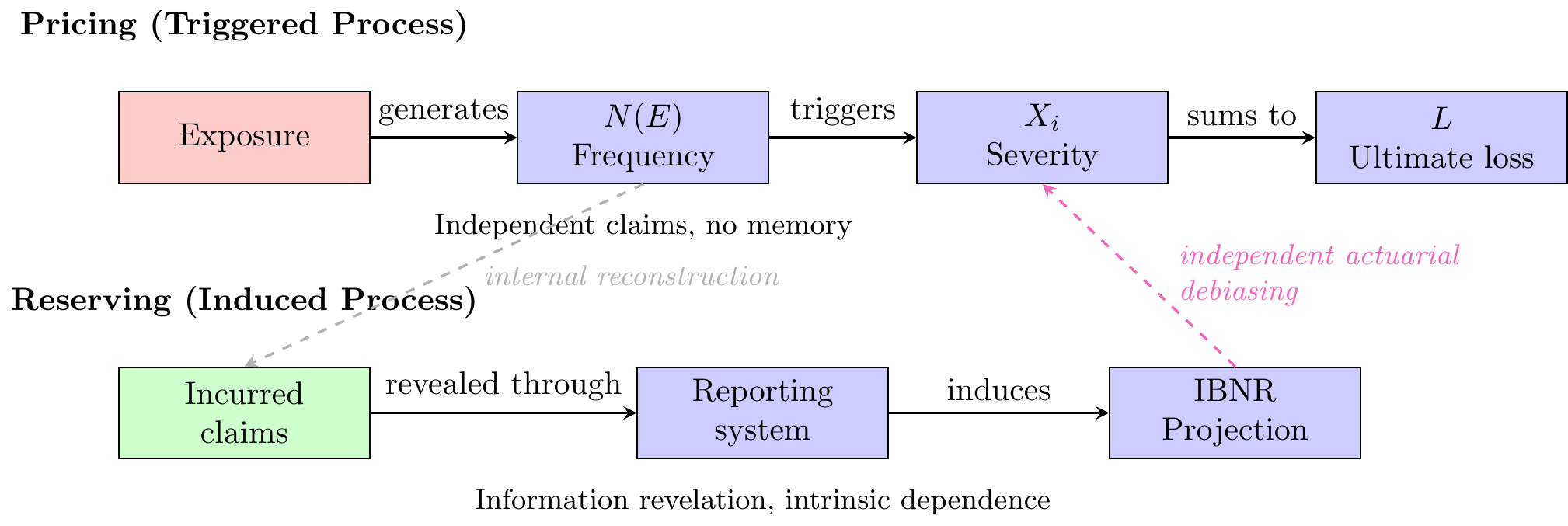

In pricing, aggregate loss is constructed hierarchically from first principles. We begin with exposure, which determines the opportunity for claims to occur. Conditional on exposure, we model claim frequency; conditional on claim occurrence, we then model claim severity.

Formally, aggregate loss is defined as

$$ L = \sum_{i=1}^{N(E)} X_i $$

where:

- $ E $ denotes exposure,

- $ N(E)$ is the claim count process parameterized by, or conditional on, exposure,

- $ X_i$ are claim severities, conditional on a claim occurring.

Thus, the claim count process is not modeled independently: it is itself induced by the real exposure. The modeling structure is therefore hierarchical,

$$ E \;\rightarrow\; N(E) \;\rightarrow\; X_i \;\rightarrow\; L. \tag{rcor} $$

Nevertheless, this remains a triggered process:

- claims are generated independently

- structure is imposed ex-ante

- the system is built from zero

The hierarchy describes the order of construction, not an internal systemic dependence. There is no memory across claims beyond what is explicitly modeled. Any dependence must be added deliberately.
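As an illustration of this construction, the sketch below simulates $L$ under assumptions that are not in the post: a Poisson claim count with mean proportional to exposure, and independent exponential severities. The parameter values and function names are hypothetical.

```python
import random


def sample_claim_count(exposure, rate_per_unit, rng):
    """Draw N(E) ~ Poisson(exposure * rate_per_unit) by counting unit-rate arrivals."""
    mean_count = exposure * rate_per_unit
    count, elapsed = 0, rng.expovariate(1.0)
    while elapsed < mean_count:
        count += 1
        elapsed += rng.expovariate(1.0)
    return count


def sample_aggregate_loss(exposure, rate_per_unit, mean_severity, rng):
    """Draw L = X_1 + ... + X_N(E) with independent exponential severities."""
    n_claims = sample_claim_count(exposure, rate_per_unit, rng)
    return sum(rng.expovariate(1.0 / mean_severity) for _ in range(n_claims))


if __name__ == "__main__":
    rng = random.Random(42)
    losses = [sample_aggregate_loss(100, 0.05, 1_000, rng) for _ in range(10_000)]
    print(f"simulated mean loss: {sum(losses) / len(losses):.0f}")
    print(f"theoretical mean   : {100 * 0.05 * 1_000:.0f}")
```

Every draw is built from zero: nothing in one simulated claim informs another unless a dependence is coded in explicitly, which is the sense in which the process is triggered.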

Reserving (IBNR): an Induced System

IBNR starts from a fundamentally different object: not from exposure, but from claims that have already occurred.

The uncertainty is no longer:

- whether claims will happen

but:

- how already occurred claims are revealed over time

This shifts the focus from $ N(E) $ to the reporting delay mechanism:

$$ D = \text{reporting delay} $$

At this point, the process is no longer triggered — it is inferred.

- claims exist but are partially hidden

- information unfolds through a system

- dependence is embedded in that system

This is an induced process. RBNS is conditioned by the insurer’s informational and operational state and may over- or understate ultimate loss. IBNR is therefore not merely extrapolation, but an independent actuarial correction of insurer-conditioned bias.
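A small simulation makes the change of object explicit. In the sketch below, all claims have already occurred before the valuation date; the only uncertainty is which of them have been reported. The uniform occurrence dates, the exponential delay $D$, and all parameter values are assumptions made for illustration.

```python
import random


def split_by_reporting(n_claims, valuation_time, mean_delay, rng):
    """Split occurred claims into reported and not-yet-reported at valuation.

    Occurrence times are uniform on [0, valuation_time]; each claim is
    reported after an exponential delay D with mean `mean_delay`.
    """
    reported, unreported = 0, 0
    for _ in range(n_claims):
        occurrence = rng.uniform(0.0, valuation_time)
        delay = rng.expovariate(1.0 / mean_delay)
        if occurrence + delay <= valuation_time:
            reported += 1
        else:
            unreported += 1  # occurred, but still hidden at the valuation date
    return reported, unreported


if __name__ == "__main__":
    rng = random.Random(7)
    reported, unreported = split_by_reporting(1_000, 12.0, 3.0, rng)
    print(f"reported: {reported}, not yet reported: {unreported}")
```

The number of claims is fixed before the simulation starts; only the reporting mechanism decides how many of them are visible.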

Why This Difference Matters

The transition from pricing to reserving is not incremental — it is structural.

| Aspect | Pricing | Reserving (IBNR) |
|---|---|---|
| Starting point | Exposure | Incurred claims |
| Mechanism | Claim generation | Information revelation |
| Process type | Triggered | Induced |
| Dependence | Optional (modeled) | Intrinsic (system-driven) |

This explains a key inconsistency in standard practice: applying pricing logic (triggered) to reserving problems (induced). Estimating IBNR per LoB is often justified by increased granularity. Structurally, however, the truncation that accompanies such segmentation is itself what cuts information.

More precisely:

- it breaks cross-segment dependence

- it fragments a common reporting-delay mechanism

- it replaces a single induced system with multiple artificial ones

This is equivalent to: breaking a single coherent loop into disconnected fragments.

Mixed Structures: A Hidden Instability

A particularly dangerous case arises when:

- some LoBs preserve structure

- others are fragmented or weakly modeled

This corresponds to a hybrid prisoners system:

- some follow the loop strategy

- others guess randomly

In such a hybrid system, even a small fraction of participants breaking the loop can cause the entire structure to collapse, as coherence is a global property, not a local average. The result is not a weighted average — it is structural degradation. Once the loop is broken, coherence cannot be recovered by aggregation.
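The collapse can be quantified. A prisoner who guesses at random succeeds with probability 1/2 regardless of the permutation, so with $k$ random guessers the joint success probability is at most $(1/2)^k$. The Python sketch below simulates such a hybrid system; the function names, trial count, and seed are illustrative assumptions.

```python
import random


def loop_search(perm, prisoner, max_opens):
    """Follow the pointer chain starting at the prisoner's own drawer."""
    drawer = prisoner
    for _ in range(max_opens):
        if perm[drawer] == prisoner:
            return True
        drawer = perm[drawer]
    return False


def random_search(perm, prisoner, max_opens, rng):
    """Open `max_opens` distinct drawers chosen uniformly at random."""
    return any(perm[d] == prisoner for d in rng.sample(range(len(perm)), max_opens))


def hybrid_success_rate(n, n_guessers, trials, seed=0):
    """Share of trials in which all n prisoners succeed when the first
    `n_guessers` prisoners guess at random and the rest follow the loop."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(trials):
        perm = list(range(n))
        rng.shuffle(perm)
        ok = all(
            random_search(perm, p, n // 2, rng) if p < n_guessers
            else loop_search(perm, p, n // 2)
            for p in range(n)
        )
        wins += ok
    return wins / trials


if __name__ == "__main__":
    for k in (0, 1, 2, 5, 10):
        print(f"{k:>2} random guessers: {hybrid_success_rate(100, k, 4000):.4f}")
```

Because the permutation is uniformly random, it does not matter which prisoners deviate, only how many. Each deviation roughly halves the joint success rate, so a handful of guessers is enough to erase most of the benefit of the loop.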

The Core Confusion

The standard actuarial approach implicitly assumes: IBNR is unobserved claims within the same process. This is incorrect. IBNR is not part of the triggering mechanism — it is a projection of the reporting system.

Treating it as triggered leads to:

- segmentation by LoB

- independent modeling assumptions

- artificial reconstruction via summation

All of these assume that the uncertainty is local to each segment. Once events are triggered:

- dependence no longer comes from $ N(E) $, breaking the hierarchical structure (rcor)

- it comes from how information propagates

This propagation is:

- system-wide

- correlated

- structurally induced
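A minimal sketch of such system-wide propagation, under assumptions that are not in the post: two segments share a common reporting-speed factor (for example, a backlog in claims handling), modeled here as a random multiplier on the mean reporting delay. The segment sizes, the exponential delay, and the three backlog levels are hypothetical.

```python
import math
import random


def unreported_count(n_claims, mean_delay, horizon, rng):
    """Claims (occurring uniformly on [0, horizon]) still unreported at `horizon`."""
    count = 0
    for _ in range(n_claims):
        time_left = horizon - rng.uniform(0.0, horizon)
        if rng.expovariate(1.0 / mean_delay) > time_left:
            count += 1
    return count


def pearson(xs, ys):
    """Plain Pearson correlation coefficient."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def simulate_segments(scenarios, shared, seed=0):
    """Unreported counts of two segments across scenarios.

    If `shared` is True both segments use the same backlog factor in each
    scenario; otherwise each segment draws its own factor independently.
    """
    rng = random.Random(seed)
    seg_a, seg_b = [], []
    for _ in range(scenarios):
        factor_a = rng.choice((0.5, 1.0, 2.0))
        factor_b = factor_a if shared else rng.choice((0.5, 1.0, 2.0))
        seg_a.append(unreported_count(200, 3.0 * factor_a, 12.0, rng))
        seg_b.append(unreported_count(200, 3.0 * factor_b, 12.0, rng))
    return seg_a, seg_b


if __name__ == "__main__":
    for shared in (True, False):
        a, b = simulate_segments(300, shared)
        print(f"shared factor={shared}: correlation={pearson(a, b):.2f}")
```

With a shared factor the unreported counts of the two segments move together even though their claims are generated independently; modeling each segment on its own data treats that co-movement as noise.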

The Missing Link: Why RBNS Is Not Memoryless

RBNS is often treated as if each claim were evaluated independently — implicitly assuming a memoryless structure.

This is incorrect in practice.

- claim handlers learn from previous cases

- models are calibrated on historical portfolios

- similar claims influence each other implicitly

Therefore: RBNS is not memoryless — it is already partially induced. This creates a tension:

- micro-level evaluation (RBNS) appears local

- but the information used is global

Consequently, even if IBNR modeling treats segments independently, the implicit cross-segment structure in RBNS already undermines that independence.

Structural Consequence

Even if each LoB is modeled with precision:

- the global dependence is destroyed

- the induced mechanism disappears

- the system reverts toward a triggered-process approximation

This is directly analogous to abandoning the loop strategy and reverting to independent guessing. The key difference between pricing and reserving is not technical — it is ontological:

- pricing asks: what will be generated?

- reserving asks: what already exists but is not yet visible?

And that leads to the central conclusion:

IBNR is not generated by claims themselves alone, but by the informational structure governing their reporting, evaluation, and potential estimation errors. Therefore, modeling IBNR through data truncation is not a refinement of the estimate — it alters the very nature of the process being modeled.

Conclusion

A recurring pattern appears across disciplines: models are simplified for tractability, simplifications become habitual, and eventually, they are mistaken for the underlying structure.

The implication for actuarial practice is clear: segmentation through data truncation, when applied to IBNR, is not a refinement; it is a redefinition of the process being modeled. To preserve structural coherence, reserving models must either:

- begin from a global joint model and derive segment-specific projections as conditional views, or

- explicitly demonstrate that segmentation does not distort the aggregate reserve.

Otherwise, the result is not increased precision, but the replacement of an induced system with a collection of artificial triggered approximations — structurally analogous to abandoning the loop strategy in favor of independent guessing.

@online{Cornaciu2026Induced,
  author = {Cornaciu, Valentin},
  orcid = {0000-0001-9239-7145},
  title = {The 100 Prisoners Problem: Induced vs. Triggered Processes in Risk Management},
  year = {2026},
  date = {2026-04-20},
  url = {https://rcor.ro/posts/2026-03-30-the-100-prisoners-problem-induced-vs-triggered-processes-in-risk-management/},
  abstract = {Using the 100 prisoners problem as a structural thought experiment, this post distinguishes triggered processes, driven by independent actions, from induced processes, generated by internal system structure. The framework is used to highlight how dependence structures can fundamentally alter aggregate outcomes, with applications to risk management and actuarial reserving. In particular, it illustrates how ignoring induced structure may lead to severe underestimation of collective risk, while exploiting it can reveal non-trivial stability phenomena under aggregation.}
}

Curtin, Eugene, and Max Warshauer. 2006. “The Locker Puzzle.” The Mathematical Intelligencer 28 (1): 28–31. https://www.cl.cam.ac.uk/~gw104/Locker_Puzzle.pdf.

Gál, Anna, and Peter Bro Miltersen. 2007. “The Cell Probe Complexity of Succinct Data Structures.” Theoretical Computer Science 379 (3): 405–17. https://doi.org/10.1016/j.tcs.2007.02.041.

Pahor, Milan. 2024. “The 100 Prisoners Problem.” 2024. https://www.parabola.unsw.edu.au/sites/default/files/2024-09/vol60_no2_2.pdf.

[^1]: 50% is a universal joint-probability bound, since no single participant can succeed with probability above 1/2.

[^2]: Legal basis:

    - Article 34(2) of Delegated Regulation (EU) 2015/35: The actuarial and statistical methods shall be consistent with and make use of all relevant data available for the calculation of the best estimate.

    - Article 34(3) of Delegated Regulation (EU) 2015/35: Where a calculation method is based on grouped data, the grouping of data shall be carried out in a manner that ensures that it does not distort the calculation of the best estimate.

    - Article 80 of Directive 2009/138/EC: Insurance and reinsurance undertakings shall segment their insurance and reinsurance obligations into homogeneous risk groups, and as a minimum by lines of business.

    - Article 19 of Delegated Regulation (EU) 2015/35: The data used in the calculation of technical provisions shall be considered exhaustive within the meaning of Article 82 of Directive 2009/138/EC if: […] (b) no information is excluded from use in the calculation of technical provisions without justification.